What if you could look down and see your actual arms and legs inside VR, or look at other real-world people or objects as if you weren’t wearing a headset?

The team at Imverse spent five years building this technology at universities in Switzerland and Spain. “We were working on this before Oculus was even created,” says co-founder Javier Bello Ruiz. Now its real-time mixed reality engine is ready for public demos, debuting this month at the Sundance Film Festival.

Imverse’s tech has the power to make VR seem much more believable and easier to adjust to, which is critical as the industry tries to grow headset ownership among mainstream buyers. The startup wants to become a foundational software platform for developing experiences, like Unity or Unreal. But even if its commercialization stumbles, one of the VR giants would probably love to buy Imverse’s tech.

Below you can see my demo video of Imverse’s mixed reality engine from Sundance 2018:

While there’s certainly some pixelation, rough edges, and moments when the rendered image is inaccurate, Imverse is still able to deliver the sensation of your real body existing in VR. It also offers the bonus ability to render other objects, including people, allowing Ruiz to shake my hand while he’s in a VR headset and I’m not. That could be helpful for bringing VR into homes where family members might need to share the living room without knocking into people or things, especially if someone’s trying to get your attention when you have a headset and headphones on.

The first experience built with the real-time rendering is Elastic Time, which lets you play with a tiny black hole. Pull it in close to your body, and you’ll see your limbs bent and sucked into the abyss. Throw it over to a pre-recorded professor talking about space/time phenomena, and his image and voice get warped. And as a trippy finale, you’re removed from your body so you can watch the scene unfold from the third person as the rendering of your real body is engulfed by the black hole and spat back out.

“This collaboration came out of an artist residency I did at the lab of cognitive neuroscience in Switzerland,” says Mark Boulos, the artist behind the project. “They had developed their tech to use in their experiments and neuroprosthesis.”

Imverse’s volumetric rendering engine detects your position while also capturing what you look like so that it can be displayed in VR

Between microfluidic haptic gloves that let you feel virtual objects and sense heat, and psychedelic experiences like Requiem For A Dream director Darren Aronofsky’s galaxy tour Spheres, there was plenty to wow VR fans at Sundance. Yet Imverse is what stuck with me. It unlocks a new level of presence, which every VR experience and gadget aspires to. Actually seeing your own skin and clothes inside VR is a big step up from floating representations of hand controllers or trackers that merely show where you are. You feel like a full human being rather than a disembodied head.

That’s why it’s so impressive that the Imverse team has just four core members and has only raised $400,000. It got a big head start because CTO Robin Mange has been focusing on volumetric rendering for 12 years. Ruiz explains that Imverse’s tech is “probably his fifth or sixth graphics engine he’s created,” and that Mange had been trying to build a photorealistic environment for neurological experiments but wanted to add perception of one’s own body.

Imverse is now working on raising several million in a Series A to fund a presence in Los Angeles, where it’s working with content studios like Emblematic Group. Ruiz says that will solve one of the startup’s main challenges, which is that in Switzerland, “you have to first convince people that VR is important, and then that our technology is better.”

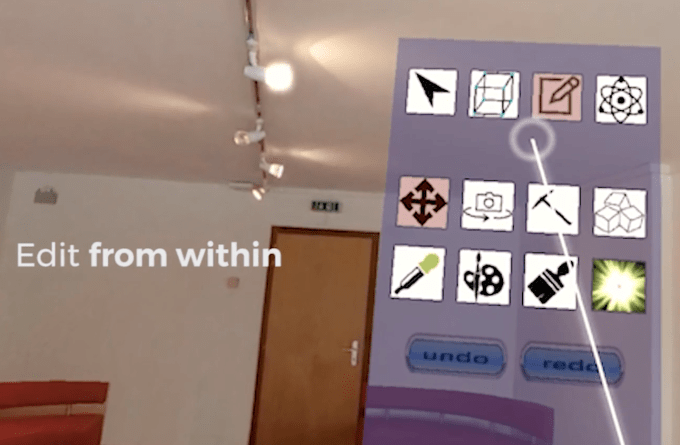

In the meantime, Imverse is developing LiveMaker, which Ruiz calls a “Photoshop for VR” that offers a floating toolbox you can use to edit and create virtual experiences from inside the headset. He says film studios could use it to make VR cinema, but it could also help marketers and real estate companies, or even run mathematical simulations. Imverse’s earlier work allowed a single 360 photo to be turned into a VR model of a space that could be explored or altered.

Imverse’s “LiveMaker” is like a Photoshop for VR

There’s plenty of room for Imverse to make its mixed reality engine sharper and less choppy. The drifting pixels can make it feel like you’ve been haphazardly cut out and stuck into VR. Yet it still gave me a sense of place, like I was just in a different real world with my body intact rather than in a completely make-believe existence. That could be key to VR fulfilling its destiny as an empathy machine, allowing us to absorb someone else’s perspective by acting out their life in our own skin.